Hello everyone. Today I have something a little atypical to share. I recently built a piece of software that I am calling “Orbital Oracle”. Essentially, users submit information about their birth to Divine API, and the response (personalized data from a Western natal astrology paradigm) is fed to an AI model deployed locally in my home for summary and delivery.

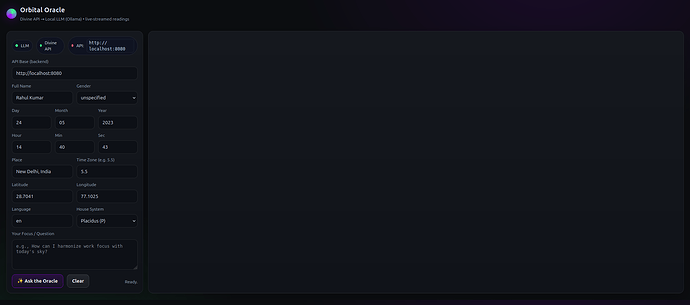

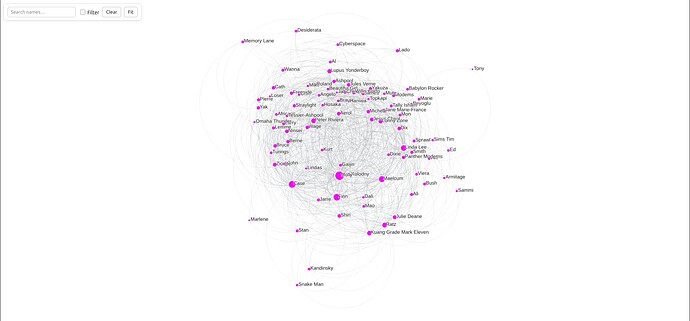

Here is a screenshot of the frontend UI as it stands right now:

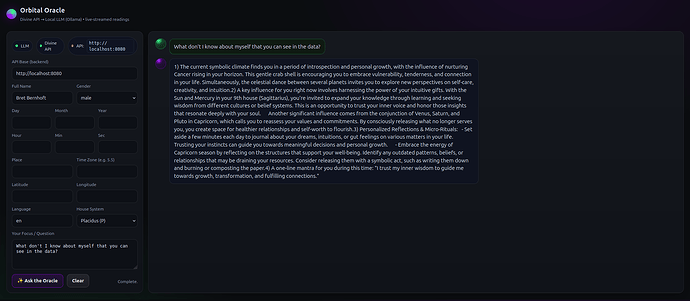

On the left side of the Orbital Oracle application, the user has a form for entering data about themselves, including an “Ask the Oracle” button. After they submit their info, a chat message begins printing on the right side of the screen, containing the local AI’s assessment of their astrological data, as seen in the screenshot above.

For context, since my last post, I have deployed several AI models on a computer within my private LAN. I am now actively learning how to program software that can interact with these models, through projects such as the one featured in this post.

Details About How “Orbital Oracle” Functions

First, I start a Python script (a Flask server) on my main computer, which acts as the connector between Divine API, the user and the LLM in my homelab. Next, on the same computer, I launch a frontend UI written in JavaScript, CSS and HTML, which lets a user submit the place, date and time of their birth.

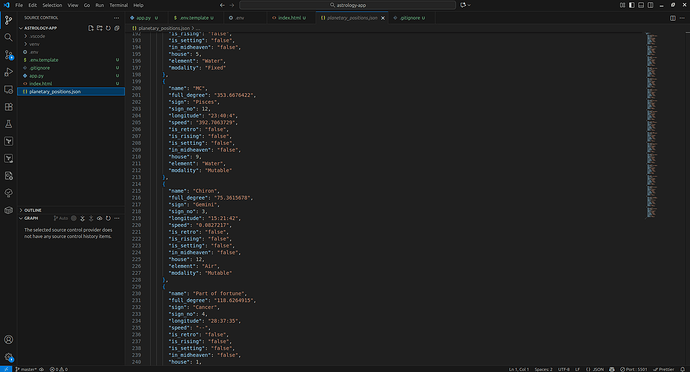

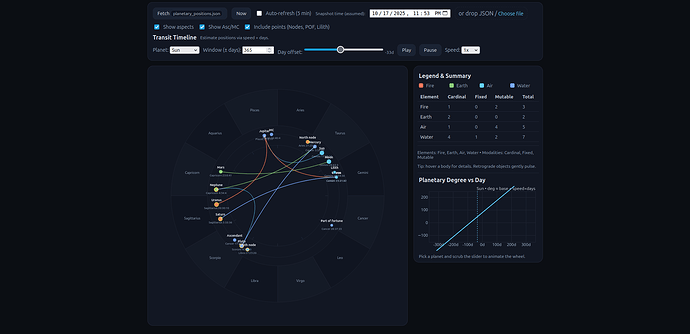
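To make the form step concrete, here is a minimal sketch of how a backend might validate the three submitted fields before doing anything else with them. The field names (`place`, `date`, `time`), the date and time formats, and the `BirthInfo` and `parse_birth_form` names are all assumptions made for illustration, not the actual Orbital Oracle code.

```python
from dataclasses import dataclass
from datetime import datetime


@dataclass(frozen=True)
class BirthInfo:
    """Hypothetical container for what the form collects."""
    place: str
    moment: datetime  # birth date and time combined, no timezone attached


def parse_birth_form(form: dict) -> BirthInfo:
    """Validate the three form fields and merge date and time into one value."""
    place = str(form.get("place", "")).strip()
    if not place:
        raise ValueError("place of birth is required")
    try:
        # Assumed formats: ISO date (YYYY-MM-DD) and 24-hour time (HH:MM).
        moment = datetime.strptime(
            f"{form['date']} {form['time']}", "%Y-%m-%d %H:%M"
        )
    except (KeyError, ValueError) as exc:
        raise ValueError("date must be YYYY-MM-DD and time must be HH:MM") from exc
    return BirthInfo(place=place, moment=moment)
```

Rejecting bad input at this point means the later API calls only ever see a complete place, date and time.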

Once that information is entered, the user presses a submit button and off the data goes to five separate Divine API endpoints, including planetary positions, moon phases and house cusps, among others. Each returns numeric values representing how an individual is weighed and measured in Western natal astrology, based on the location and moment of their birth.
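The fan-out to several endpoints could be sketched roughly as follows. This is only an illustration: the endpoint names are placeholders, only three of the five endpoints are named in this post, and the `post` callable stands in for whatever HTTP client and Divine API credentials a real script would use.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable

# Placeholder names, not real Divine API paths. Only these three of the
# five endpoints are named in the post.
ENDPOINTS = ("planetary-positions", "moon-phases", "house-cusps")


def fetch_chart(
    birth: dict,
    post: Callable[[str, dict], dict],
    endpoints: tuple = ENDPOINTS,
) -> dict:
    """Send the same birth payload to every endpoint; collect results by name."""
    with ThreadPoolExecutor(max_workers=max(1, len(endpoints))) as pool:
        # Submit all requests first so they run concurrently.
        futures = {name: pool.submit(post, name, birth) for name in endpoints}
        # result() blocks until each call finishes and re-raises its errors.
        return {name: future.result() for name, future in futures.items()}
```

Passing `post` in as a parameter keeps the sketch independent of any particular HTTP library and makes it easy to try with a stub, for example `fetch_chart({"date": "1990-04-12"}, lambda name, payload: {"endpoint": name})`.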

That data, along with a well-structured prompt and deeper context, is passed to an AI model on another computer in my homelab for review. The model processes the values returned from Divine API, looking for patterns, likelihoods and anything else of interest, and finally delivers a chat response to the user.
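As a rough sketch of the hand-off to the model, the API results and the prompt might be packaged like this. It assumes the local model accepts the role/content chat message format that many local LLM servers use. The prompt text and the `build_messages` name are placeholders, not the prompt or code actually used in Orbital Oracle.

```python
import json

# Placeholder system prompt for illustration only.
SYSTEM_PROMPT = (
    "You are an assistant that summarizes Western natal astrology data "
    "for the person it describes."
)


def build_messages(chart: dict, system_prompt: str = SYSTEM_PROMPT) -> list:
    """Combine the prompt and the raw API values into chat-style messages."""
    # sort_keys keeps the serialized chart stable between runs.
    chart_json = json.dumps(chart, indent=2, sort_keys=True)
    return [
        {"role": "system", "content": system_prompt},
        {"role": "user", "content": f"Natal chart data:\n{chart_json}"},
    ]
```

Serializing the values as JSON lets the model see the original field names next to the numbers, rather than a hand-written summary of them.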

Relationship To Self-Quantification

Orbital Oracle is related to self-quantification because the values returned from Divine API are treasured and respected by many, and have been for a long time. In other words, Western natal astrology can be considered a quasi form of self-quantification, at least in the context of such a project.

With this post, I am trying to show how a person (represented by their birth time, date and location) can interact with ancient forms of self-quantification using modern technologies. Regardless of what one thinks about the information source and subject, that is interesting and worth experimenting with, especially when tied in with modern AI.